Distributional Reinforcement Learning

Alberto Maria Metelli

DEIB Ph.D. Student

DEIB - Seminar Room

November 21st, 2017

10.30 am

Research Line:

Artificial Intelligence and Robotics

DEIB Ph.D. Student

DEIB - Seminar Room

November 21st, 2017

10.30 am

Research Line:

Artificial Intelligence and Robotics

Abstract

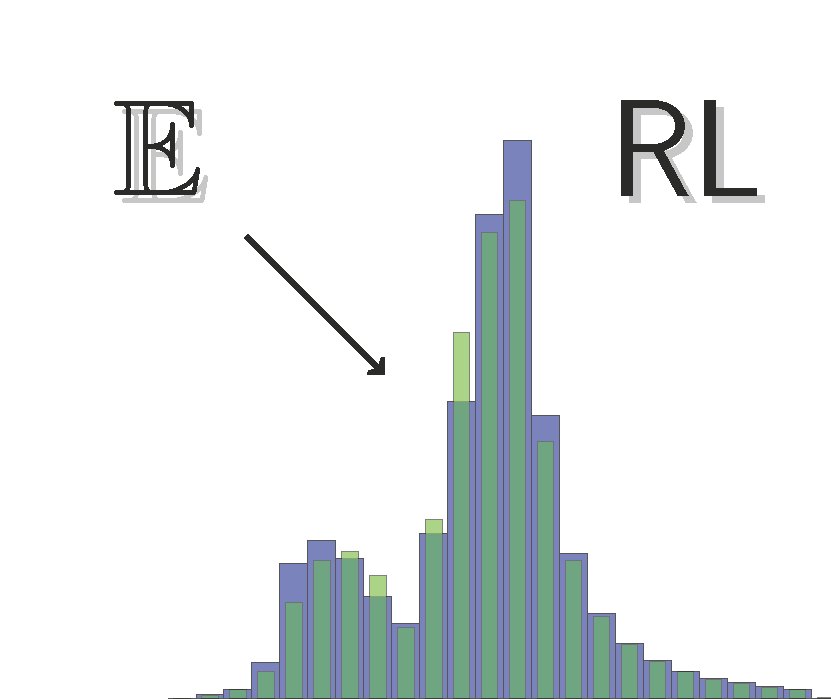

The classic approaches to Reinforcement Learning aim to represent the performance of a policy by means of its expected cumulative reward. This information, although synthetic and simple to treat, does not account for the complex and possibly multimodal nature of the reward distribution. In this seminar, we present the approach proposed by M. G. Bellemare, W. Dabney and R. Munos in “A Distributional Perspective on Reinforcement Learning” (ICML 2017) in which the distribution of the cumulative reward is explicitly represented. We start rephrasing the policy evaluation problem into the distributional framework, adapting the Bellman operator and showing the strong analogy with the non-distributional case. Then, we analyze the control problem highlighting the fundamental theoretical differences w.r.t. the classic approach. We present the experimental evaluation performed on the Atari games suite that proves the effectiveness of the approach, able to outperform the state-of-the-art deep reinforcement learning techniques, despite its theoretical limitations. Finally, we provide some interpretations of this unexpected good performance in terms of regularization properties.